InfiniBand vs. RoCE for AI Training

InfiniBand matters for distributed training across 16+ GPUs. For single-node workloads, standard networking is fine. This guide …

Blog

Technical guides, platform updates, and engineering insights from the team.

GPU cloud providers fall into three categories: operators who own both their data centers and their hardware, hardware owners who rent colocation space, and aggregators who resell third-party capacity. The ownership model directly affects pricing stability, SLAs, and support accountability for production AI workloads.


Why HPC teams want SLURM semantics even when they have Kubernetes, and how to get both on Nebius AI Cloud.

How to run NCCL all_reduce benchmarks to verify your GPU cluster's interconnect performance before running production training.

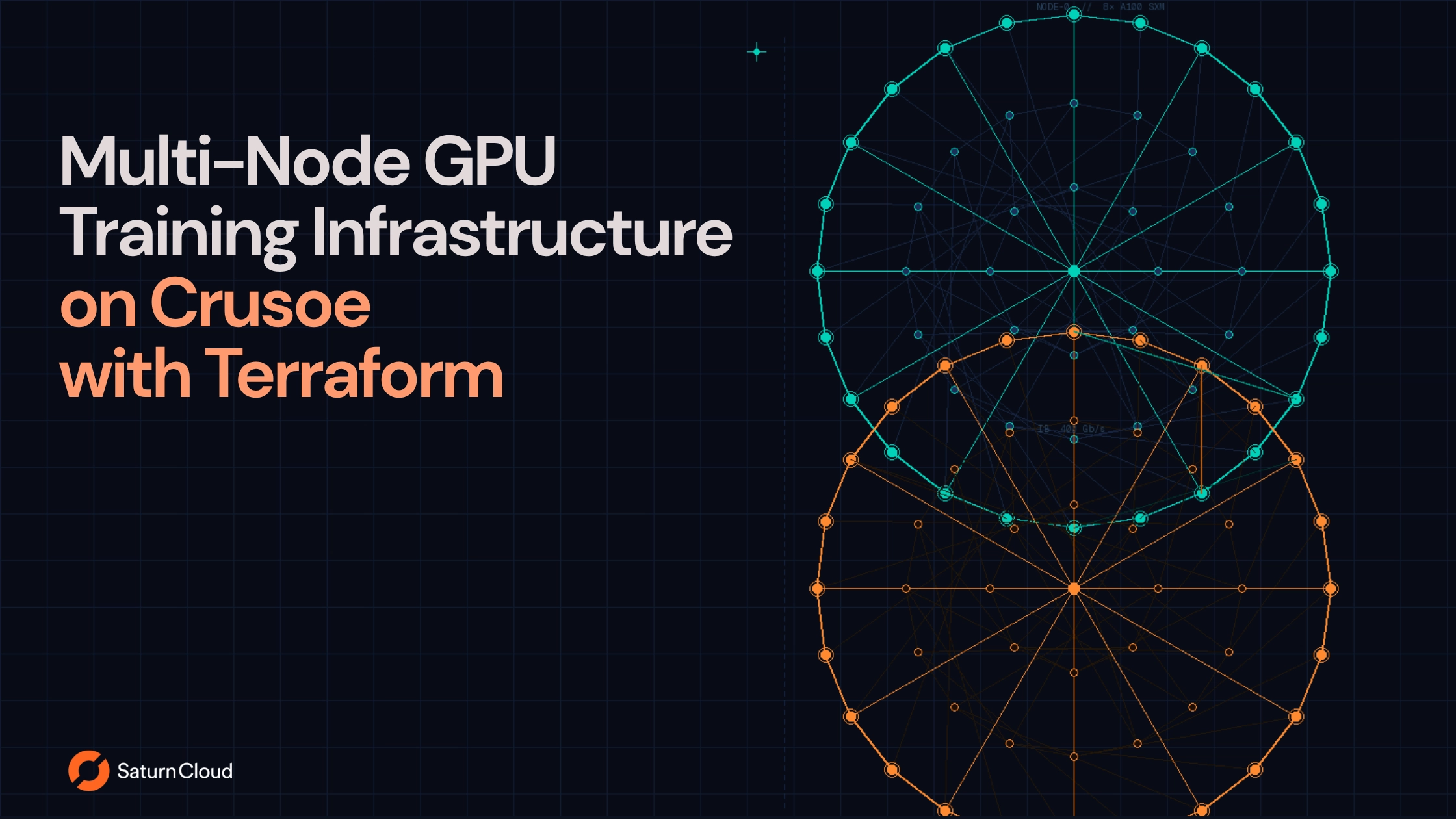

Provisioning multi-GPU clusters with InfiniBand and NVLink using the Crusoe Terraform provider for distributed training workloads.

How to deploy Saturn Cloud on Crusoe for teams that need H100, H200, and GB200 GPUs without hyperscaler quota constraints.

Practical answers to the questions you'll have when provisioning InfiniBand-connected GPU clusters on Crusoe.