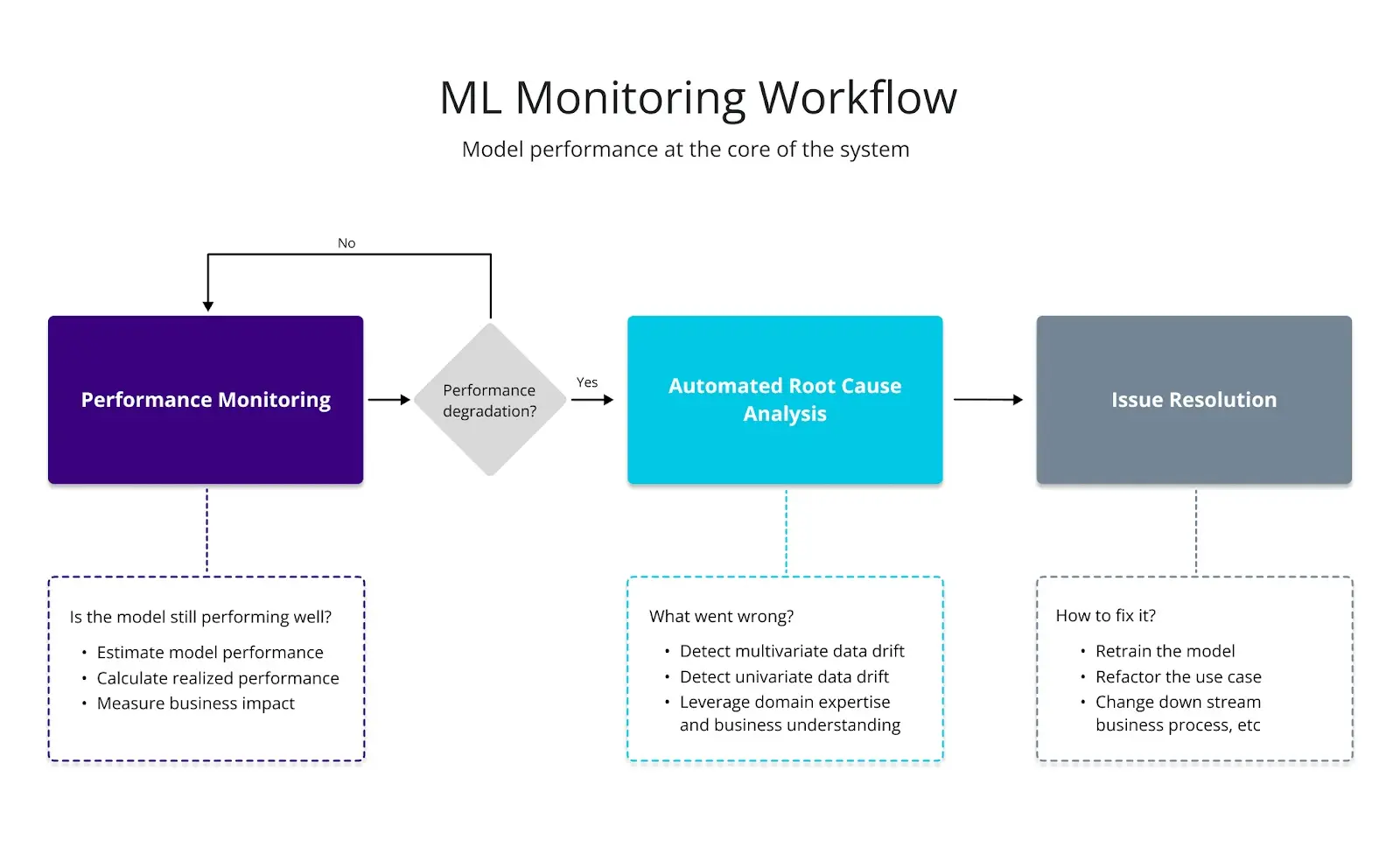

Model monitoring is the process of tracking the performance of a deployed machine learning model in real time and making adjustments as needed so that the model continues to perform accurately and reliably.

(Image source: NannyML)

Some key terms and concepts related to model monitoring include:

Model drift: a common issue in model monitoring where a model's accuracy and reliability degrade over time, typically because the data it sees in production shifts away from the data it was trained on

Feature drift: a type of model drift where the statistical properties of the input features change over time

Concept drift: a type of model drift where the relationship between the input features and the output labels changes over time

Data quality: a key factor in model monitoring that involves ensuring that the data used to train and evaluate the model, as well as the data it receives in production, is accurate, consistent, and representative of real-world conditions

Performance metrics: measures used to evaluate the performance of the model; for classification models these include accuracy, precision, recall, and F1 score

Thresholds: predefined limits on performance metrics that trigger an alert when a metric falls below or rises above them

Automated monitoring: using software tools and systems to automate the process of model monitoring and trigger alerts when issues are detected

Manual monitoring: checking the performance of the model by hand on a regular basis to detect issues or trends
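
Feature drift, as defined above, can be quantified by comparing the distribution of a feature in a reference window (for example, the training data) against a recent production window. The sketch below uses the Population Stability Index (PSI), which is one common choice among several and is not something the definitions above prescribe. The function name, the bin count, and the synthetic Gaussian data are all assumptions made for illustration.

```python
import math
import random


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a current sample of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins
    if width == 0:
        width = 1.0  # degenerate case: the reference feature is constant

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Equal-width bins over the reference range; values outside
            # that range are clamped into the first or last bin.
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from causing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


# Synthetic data (illustrative only): one production window drawn from the
# same distribution as the reference, one with a shifted mean.
rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same_dist = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_same = population_stability_index(reference, same_dist)
psi_shifted = population_stability_index(reference, shifted)
print(f"PSI, same distribution:    {psi_same:.3f}")
print(f"PSI, shifted distribution: {psi_shifted:.3f}")
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cutoffs are conventions rather than guarantees and should be tuned per feature. Note that PSI only looks at the inputs, so it can flag feature drift but not concept drift, which requires comparing predictions against actual labels.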

Overall, model monitoring is a critical step in the machine learning workflow because it helps keep a model accurate and reliable after deployment. By watching for model drift in both its forms, feature drift and concept drift, and by combining appropriate performance metrics and thresholds with automated and manual monitoring, developers can catch degradation early and keep their models performing well in real-world use cases.
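
To make the interplay of performance metrics, thresholds, and automated alerts concrete, here is a minimal sketch of a single monitoring check. It assumes a binary classifier whose true labels eventually become available. The `Threshold` class, the function names, the sample labels, and the threshold values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Threshold:
    metric: str
    lower: Optional[float] = None  # alert if the metric falls below this
    upper: Optional[float] = None  # alert if the metric rises above this


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def check_thresholds(metrics, thresholds):
    """Return one alert message per violated threshold."""
    alerts = []
    for t in thresholds:
        value = metrics[t.metric]
        if t.lower is not None and value < t.lower:
            alerts.append(f"{t.metric}={value:.3f} fell below {t.lower:.3f}")
        if t.upper is not None and value > t.upper:
            alerts.append(f"{t.metric}={value:.3f} rose above {t.upper:.3f}")
    return alerts


# Hypothetical labels and predictions from one monitoring window.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 0, 1, 0, 0, 1, 0]

metrics = classification_metrics(y_true, y_pred)
alerts = check_thresholds(
    metrics,
    [Threshold("accuracy", lower=0.6), Threshold("recall", lower=0.7)],
)
for message in alerts:
    print("ALERT:", message)
```

In an automated setup a check like this would run on a schedule over each new window of labelled production data, with the alerts routed to whatever notification channel the team uses. The same functions can also back a manual review, where someone inspects the metrics dictionary directly.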